OpenLuup unavailable

-

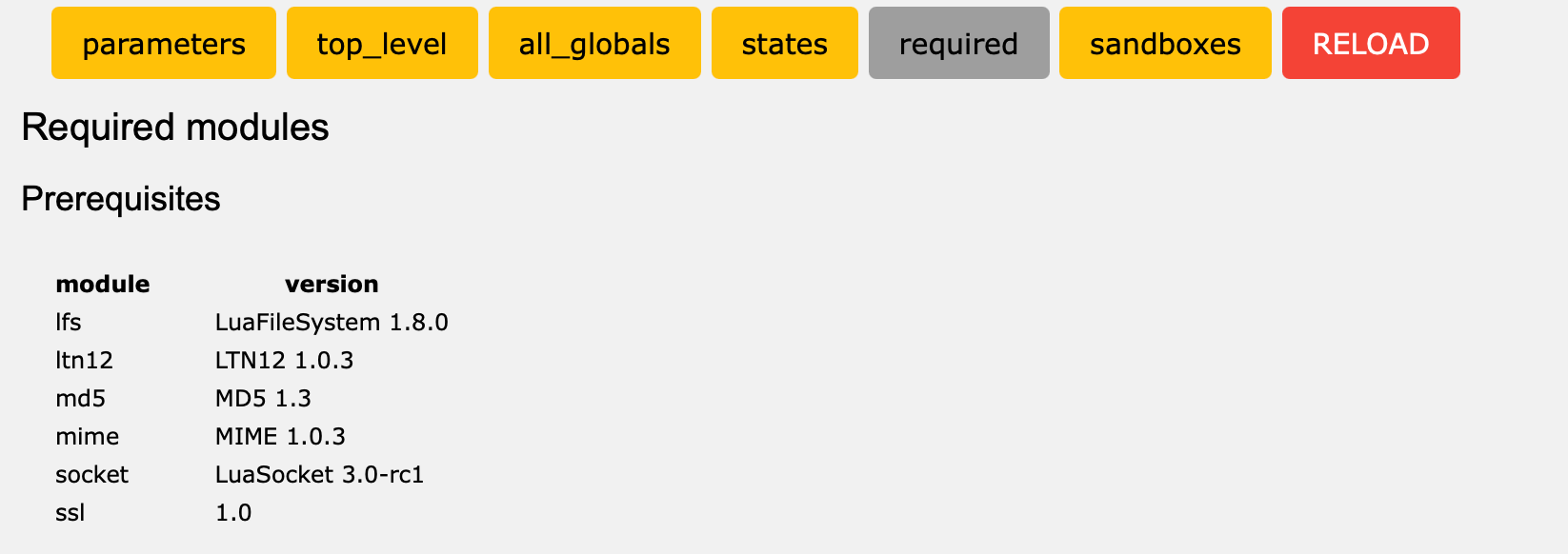

The latest development version v21.3.24 has the extra socket send check in place for every send done on an openLuup server (HTTP, MQTT, SMTP, POP3.)

Let's see if that helps anything.

-

OK, that was clearly a BAD IDEA.

2021-03-24 14:49:58.979 openLuup.server:: GET /J_ALTUI_verabox.js HTTP/1.1 tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 2 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 6 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 97222 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 2 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.983 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 5 bytes to tcp{client}: 0x1cb6160I've reverted that commit, so simply updating to development again (from the console!) should fix that.

-

OK, that was clearly a BAD IDEA.

2021-03-24 14:49:58.979 openLuup.server:: GET /J_ALTUI_verabox.js HTTP/1.1 tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 2 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 6 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 97222 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.982 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 2 bytes to tcp{client}: 0x1cb6160 2021-03-24 14:49:58.983 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x1cb6160' sending 5 bytes to tcp{client}: 0x1cb6160I've reverted that commit, so simply updating to development again (from the console!) should fix that.

-

I've pushed a new v21.3.24b release which includes the new checks, but, hopefully, without the deleterious effect on AltUI (or anything else.)

To make it easy to revert, I've also tagged the v21.3.23 version, so you can always revert to that by typing it into the update box.

-

I've pushed a new v21.3.24b release which includes the new checks, but, hopefully, without the deleterious effect on AltUI (or anything else.)

To make it easy to revert, I've also tagged the v21.3.23 version, so you can always revert to that by typing it into the update box.

Have not upgraded to 24b yet but this is what I am seeing:

2021-03-24 12:36:01.348 openLuup.io.server:: HTTP:3480 connection from 192.168.0.55 tcp{client}: 0x7fb153b396c8 2021-03-24 12:36:01.350 openLuup.server:: GET /data_request?id=variableset&serviceId=urn:upnp-org:serviceId:VSwitch1&Variable=Text2&DeviceNum=22&Value=10 HTTP/1.1 tcp{client}: 0x7fb153b396c8 2021-03-24 12:36:01.350 luup.variable_set:: 22.urn:upnp-org:serviceId:VSwitch1.Text2 was: 9 now: 10 #hooks:0 2021-03-24 12:36:01.351 openLuup.server:: error 'closed' sending 2 bytes to tcp{client}: 0x7fb153b396c8 2021-03-24 12:36:01.351 openLuup.server:: ...only 0 bytes sent 2021-03-24 12:36:01.351 openLuup.server:: error 'closed' sending 5 bytes to tcp{client}: 0x7fb153b396c8 2021-03-24 12:36:01.351 openLuup.server:: ...only 0 bytes sent 2021-03-24 12:36:01.351 openLuup.server:: request completed (2 bytes, 1 chunks, 1 ms) tcp{client}: 0x7fb153b396c8 2021-03-24 12:36:01.351 openLuup.io.server:: HTTP:3480 connection closed openLuup.server.receive closed tcp{client}: 0x7fb153b396c8I am nearly 100% convinced that the hangs are due to luasocket error or timeout handling. You can see here that likely the client which here is home-assistant sending an http call to update a device on openLuup, apparently closed the tcp connection to which openLuup is trying to send something. As a result, I am getting an error. The humor is that both home assistant and openLuup are on the same machine. I am seeing this type of errors a few times a day but have not had a hang up since my previous post (I have updated luasocket and luasec since by the way) so I don't know if they are related to this error. However my previous experience from last year seem to indicate a bug in luasocket causing it to hold up the entire lua thread.

-

I've pushed a new v21.3.24b release which includes the new checks, but, hopefully, without the deleterious effect on AltUI (or anything else.)

To make it easy to revert, I've also tagged the v21.3.23 version, so you can always revert to that by typing it into the update box.

@akbooer Upgraded to v21.2.24b, it works as supposed so far.

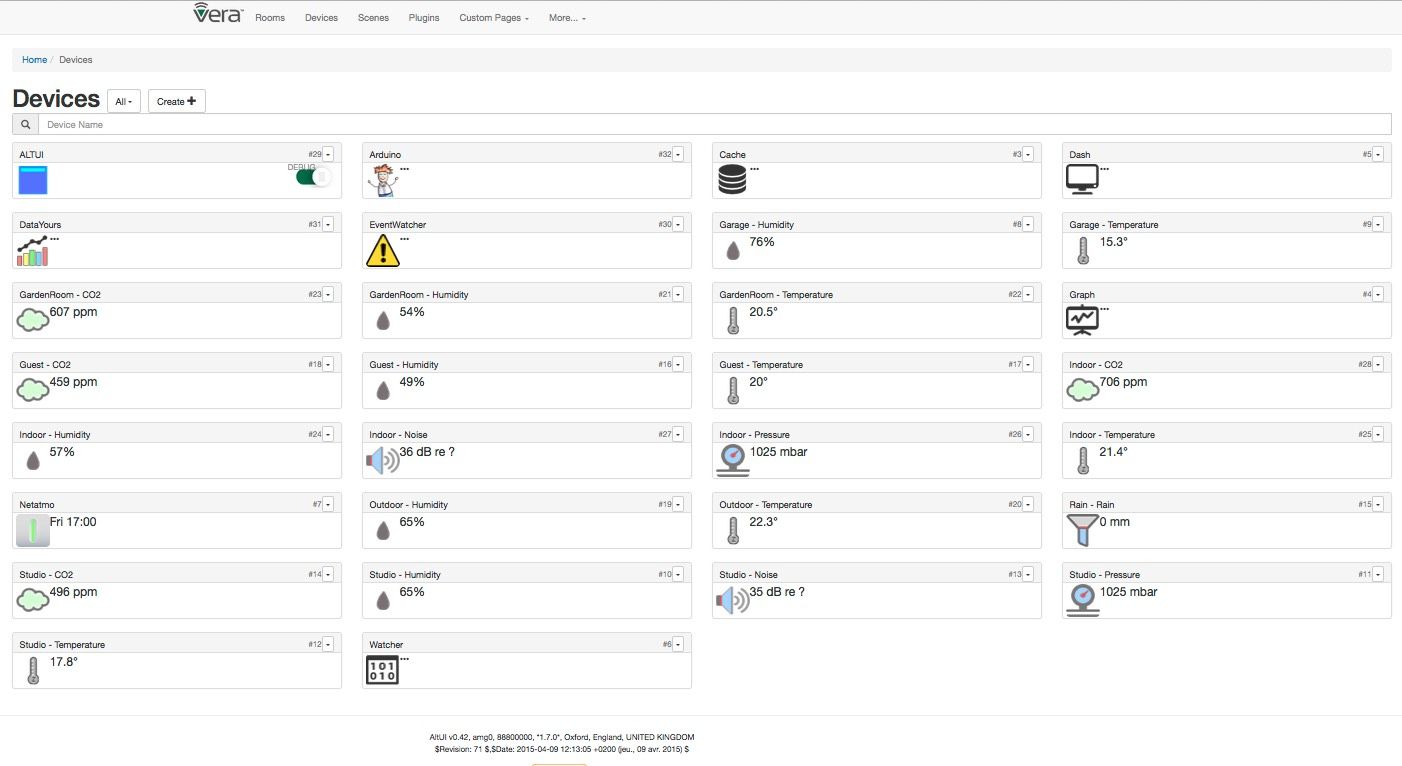

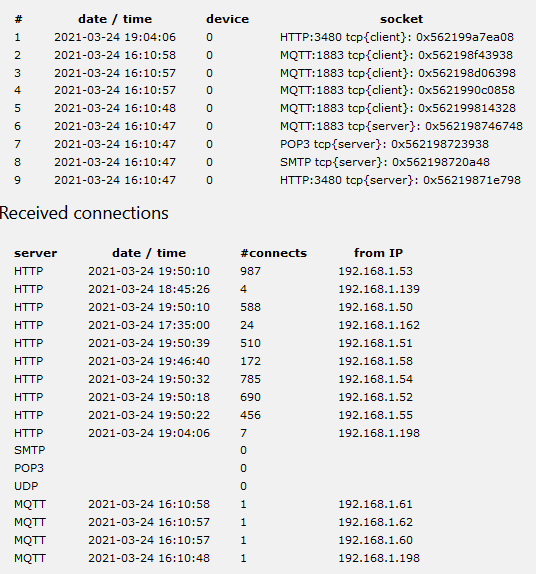

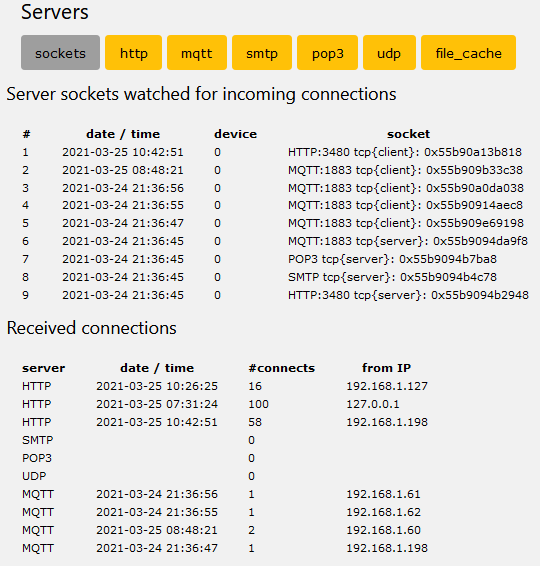

Also I modified my strategy a bit. I have now turned off the http reporting from the sensors. They generate quite a lot of http calls, the screenshot below is from approx 13:00 to 22:00.

Worth noting is that they have been doing this http reporting for a few months without any stability problems, the traffic they generate could however be part of seeing the problems on my particular server perhaps.

The three test devices reporting over Mqtt are left on, we will see what happens.

-

@akbooer Upgraded to v21.2.24b, it works as supposed so far.

Also I modified my strategy a bit. I have now turned off the http reporting from the sensors. They generate quite a lot of http calls, the screenshot below is from approx 13:00 to 22:00.

Worth noting is that they have been doing this http reporting for a few months without any stability problems, the traffic they generate could however be part of seeing the problems on my particular server perhaps.

The three test devices reporting over Mqtt are left on, we will see what happens.

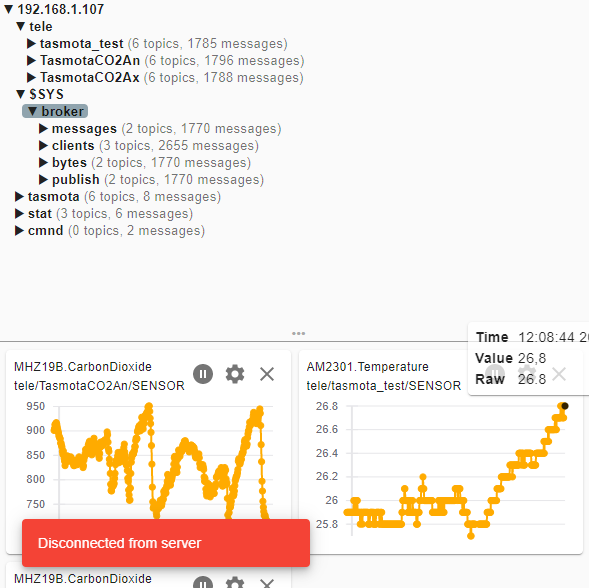

Today at 12:08 it crashed again.

Not many Mqtt calls since the restart yesterday evening.

Also very few http server sockets:

Log file errors before the crash:

.. 2021-03-25 12:05:33.879 openLuup.io.server:: MQTT:1883 connection closed tcp{client}: 0x563ca4502428 2021-03-25 12:05:33.879 openLuup.mqtt:: RECEIVE ERROR: closed tcp{client}: 0x563ca4502428 2021-03-25 12:05:33.879 openLuup.mqtt:: TasmotaCO2An UNSUBSCRIBE from cmnd/TasmotaCO2An_fb/# tcp{client}: 0x563ca4502428 2021-03-25 12:05:33.879 openLuup.mqtt:: TasmotaCO2An UNSUBSCRIBE from cmnd/tasmotas/# tcp{client}: 0x563ca4502428 2021-03-25 12:05:33.879 openLuup.mqtt:: TasmotaCO2An UNSUBSCRIBE from cmnd/TasmotaCO2An/# tcp{client}: 0x563ca4502428 2021-03-25 12:05:33.891 openLuup.mqtt:: TasmotaCO2An SUBSCRIBE to cmnd/TasmotaCO2An/# tcp{client}: 0x563ca41ea188 2021-03-25 12:05:33.894 openLuup.mqtt:: TasmotaCO2An SUBSCRIBE to cmnd/tasmotas/# tcp{client}: 0x563ca41ea188 .. 2021-03-25 12:08:44.921 openLuup.io.server:: MQTT:1883 connection closed tcp{client}: 0x563ca41ea188 2021-03-25 12:08:44.921 openLuup.mqtt:: RECEIVE ERROR: closed tcp{client}: 0x563ca41ea188 2021-03-25 12:08:44.921 openLuup.mqtt:: TasmotaCO2An UNSUBSCRIBE from cmnd/TasmotaCO2An_fb/# tcp{client}: 0x563ca41ea188 2021-03-25 12:08:44.921 openLuup.mqtt:: TasmotaCO2An UNSUBSCRIBE from cmnd/tasmotas/# tcp{client}: 0x563ca41ea188 2021-03-25 12:08:44.921 openLuup.mqtt:: TasmotaCO2An UNSUBSCRIBE from cmnd/TasmotaCO2An/# tcp{client}: 0x563ca41ea188 2021-03-25 12:08:44.925 openLuup.server:: request completed (11424 bytes, 1 chunks, 3813 ms) tcp{client}: 0x563ca3ebc488 .. 2021-03-25 12:08:44.935 openLuup.io.server:: MQTT:1883 connection closed tcp{client}: 0x563ca3b9d058 2021-03-25 12:08:44.935 openLuup.mqtt:: RECEIVE ERROR: closed tcp{client}: 0x563ca3b9d058 2021-03-25 12:08:44.935 openLuup.io.server:: MQTT:1883 connection closed tcp{client}: 0x563ca3f8a488 2021-03-25 12:08:44.935 openLuup.mqtt:: RECEIVE ERROR: closed tcp{client}: 0x563ca3f8a488 ... 2021-03-25 12:08:44.938 openLuup.io.server:: MQTT:1883 connection closed tcp{client}: 0x563ca47549f8 2021-03-25 12:08:44.938 openLuup.mqtt:: closed tcp{client}: 0x563ca47549f8 2021-03-25 12:08:44.938 openLuup.mqtt:: tasmota_test UNSUBSCRIBE from cmnd/tasmota_test_fb/# tcp{client}: 0x563ca47549f8 2021-03-25 12:08:44.938 openLuup.mqtt:: tasmota_test UNSUBSCRIBE from cmnd/tasmota_test/# tcp{client}: 0x563ca47549f8 2021-03-25 12:08:44.938 openLuup.mqtt:: tasmota_test UNSUBSCRIBE from cmnd/tasmotas/# tcp{client}: 0x563ca47549f8 2021-03-25 12:08:44.938 openLuup.mqtt:: ERROR publishing application message for mqtt:cmnd/tasmota_test/POWER : closed 2021-03-25 12:08:44.939 openLuup.io.server:: MQTT:1883 connection closed tcp{client}: 0x563ca428dc98 2021-03-25 12:08:44.939 openLuup.mqtt:: closed tcp{client}: 0x563ca428dc98 2021-03-25 12:08:44.939 openLuup.mqtt:: TasmotaCO2Ax UNSUBSCRIBE from cmnd/TasmotaCO2Ax/# tcp{client}: 0x563ca428dc98 2021-03-25 12:08:44.939 openLuup.mqtt:: TasmotaCO2Ax UNSUBSCRIBE from cmnd/tasmotas/# tcp{client}: 0x563ca428dc98 2021-03-25 12:08:44.939 openLuup.mqtt:: TasmotaCO2Ax UNSUBSCRIBE from cmnd/TasmotaCO2Ax_fb/# tcp{client}: 0x563ca428dc98 2021-03-25 12:08:44.939 openLuup.mqtt:: ERROR publishing application message for mqtt:cmnd/TasmotaCO2Ax/POWER : closed -

The log sequences for MQTT seem normal, simply denoting that a connection has been dropped by the client, so then it's unsubscribed. A while later, presumably in the bits you've edited out, it reconnects. Might be interesting to know if there were any successful transactions going on in between. What's the KeepAlive configured as on your clients?

But I'm thinking this is not a very fruitful way forward. I am really, really struggling to think what may have changed, other than the fact that there is simply more I/O going on in the system. I tend to agree with @rafale77 above.

I have not fully run out of options with the LuaSocket library, so there will be a bit more experimentation. I'm hoping that a eureka moment will strike and I realize that this is something stupid that I've done (wouldn't be the first time.)

-

Just loaded 3.25b and will continue to test. I have not had any other crash myself but again, I have not been using the MQTT server on my "production" system. I continue to suspect as I had evidence of last year that the lua thread hangs when luasocket tries to send too many packets into a closed connections and certainly the MQTT broker running in the same lua instance as openLuup increases that probability. For me it was homewave making http polling calls which suddenly went unanswered. Maybe @ArcherS, could try updating the luasocket version to the development version? It helped for me. Another idea might be to run the MQTT server on a separate lua instance? I am getting the idea from the UPnP proxy plugin which technically is really a separate server on another lua instance launched by the plugin device.

-

3.25b has yet more checking, so it's worth a go.

The thing about the openLuup MQTT implementation is that it is a server, not a client. What this means is that instead of messages going from:

client A --> PUBLISH over TCP --> server --> PUBLISH over TCP --> client BYou get:

If client A is running on openLuup

client A --> server --> PUBLISH over TCP --> client Bor if client B is runing on openLuup

client A --> PUBLISH over TCP --> server --> client Bor both under openLuup (less likely)

client A --> server --> client BThe conclusion is that splitting the server to a separate machine actually generates more TCP traffic, not less.

-

3.25b has yet more checking, so it's worth a go.

The thing about the openLuup MQTT implementation is that it is a server, not a client. What this means is that instead of messages going from:

client A --> PUBLISH over TCP --> server --> PUBLISH over TCP --> client BYou get:

If client A is running on openLuup

client A --> server --> PUBLISH over TCP --> client Bor if client B is runing on openLuup

client A --> PUBLISH over TCP --> server --> client Bor both under openLuup (less likely)

client A --> server --> client BThe conclusion is that splitting the server to a separate machine actually generates more TCP traffic, not less.

True, it generates more TCP traffic to the machine and overall but... it may reduce traffic within the openLuup/socket instance since there could be traffic not destined/subscribed to openLuup but maybe I misunderstood something...

edit: I am still seeing this packets sending into a closed connection error appearing occasionally on 25b so the keepalive may not help in my case given how short the time is.

-

Indeed, the keepalive shouldn't make much difference since MQTT has a ping/response transaction to ostensibly keep the channel open anyway. It was worh a try. On my Synology Docker system, I'm not seeing any dropouts like that at all. Once again, closed connections should be handled correctly and aren't, in themselves, necessarily sinister. It's not even totally clear that this is the reason to hang the system. Perplexing.

-

The log sequences for MQTT seem normal, simply denoting that a connection has been dropped by the client, so then it's unsubscribed. A while later, presumably in the bits you've edited out, it reconnects. Might be interesting to know if there were any successful transactions going on in between. What's the KeepAlive configured as on your clients?

But I'm thinking this is not a very fruitful way forward. I am really, really struggling to think what may have changed, other than the fact that there is simply more I/O going on in the system. I tend to agree with @rafale77 above.

I have not fully run out of options with the LuaSocket library, so there will be a bit more experimentation. I'm hoping that a eureka moment will strike and I realize that this is something stupid that I've done (wouldn't be the first time.)

@akbooer I think the KeepAlive is 15 seconds when I read up on Tasmota KeepAlive.

The connection from the devices to the server seem stable, I have seldom seen any dropouts in the device console anyway.Updated to v21.2.25b and restarted, we will se what happens!

Also enabledluup.attr_set ("openLuup.MQTT.PublishVariableUpdates", true), I had turned it off to bring down the load on messages. -

@akbooer I think the KeepAlive is 15 seconds when I read up on Tasmota KeepAlive.

The connection from the devices to the server seem stable, I have seldom seen any dropouts in the device console anyway.Updated to v21.2.25b and restarted, we will se what happens!

Also enabledluup.attr_set ("openLuup.MQTT.PublishVariableUpdates", true), I had turned it off to bring down the load on messages.I looked through the log files for errors, the red highlighting is very good to highlight the errors.

This one is Homewave (192.168.1.127) on my phone. I noticed it was slow to connect. No crash though. I had a few more similar errors in the log a bit later.

2021-03-25 20:39:31.205 openLuup.io.server:: HTTP:3480 connection from 192.168.1.127 tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.207 openLuup.server:: GET /data_request?output_format=json&id=lu_status HTTP/1.1 tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.531 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x557d7a41acf8' sending 2 bytes to tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.732 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x557d7a41acf8' sending 6 bytes to tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.925 openLuup.server:: error 'closed' sending 2 bytes to tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.925 openLuup.server:: ...only 0 bytes sent 2021-03-25 20:39:31.925 openLuup.server:: error 'closed' sending 6 bytes to tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.925 openLuup.server:: ...only 0 bytes sent 2021-03-25 20:39:31.925 openLuup.server:: error 'closed' sending 857193 bytes to tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.925 openLuup.server:: ...only 128000 bytes sent 2021-03-25 20:39:31.925 openLuup.server:: error 'closed' sending 2 bytes to tcp{client}: 0x557d7a41acf8 2021-03-25 20:39:31.925 openLuup.server:: ...only 0 bytes sent 2021-03-25 20:39:31.925 openLuup.server:: error 'closed' sending 5 bytes to tcp{client}: 0x557d7a41acf8A few Whisper errors, could be related to a Luup reload at that I made at that time:

2021-03-25 19:28:41.905 openLuup.context_switch:: ERROR: [dev #0] ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) 2021-03-25 19:28:41.905 openLuup.scheduler:: 55.urn:upnp-org:serviceId:TemperatureSensor1.CurrentTemperature ERROR ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) function: 0x557d78862090 2021-03-25 19:28:42.325 openLuup.context_switch:: ERROR: [dev #0] ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) 2021-03-25 19:28:42.325 openLuup.scheduler:: 246.urn:upnp-org:serviceId:TemperatureSensor1.CurrentTemperature ERROR ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) function: 0x557d78862090 2021-03-25 19:28:42.326 openLuup.context_switch:: ERROR: [dev #0] ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) 2021-03-25 19:28:42.326 openLuup.scheduler:: 247.urn:upnp-org:serviceId:TemperatureSensor1.CurrentTemperature ERROR ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) function: 0x557d78862090 2021-03-25 19:28:42.363 openLuup.context_switch:: ERROR: [dev #0] ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) 2021-03-25 19:28:42.363 openLuup.scheduler:: 11669.urn:upnp-org:serviceId:TemperatureSensor1.CurrentTemperature ERROR ./openLuup/whisper.lua:101: bad argument #1 to 'format' (number expected, got string) function: 0x557d78862090 2021-03-25 19:28:42.758 luup_log:3: ALTUI: startupDeferred, called on behalf of device:3 -

The Homewave one is interesting. Might just be a slow connection. I'll make the timeout for socket.select() larger (probably user-configurable.) So HomeWave works OK anyway?

Ah, very interesting. I know what the second one is. Brand new variables are (in Vera, hence openLuup too) given the default value of EMPTY. These messages come from the HIstorian (expecting a numeric value) which you have enabled?? I suspect that they will go away after the first update (so after the system has been running a while, so long as the actual device is working.)

-

The homewave errors are the same as my occasional home assistant errors. My homewave is nowhere slow and was the initial source of my openLuup hangs. I just retested it with a transition between cellular+VPN to wifi and while I used to be able to cause openLuup to crash nearly 100% of the time doing that, I am no longer able to do it. Last year, the big factor was my upgrade of luasocket to the current github master branch which although it reports as 3.0rc1 is actually scm-2. This made a night and day difference and made even homewave feel significantly more responsive. I have since also moved to running openLuup to luajit with rapidjson which also is another performance improvement. It is possible that I accidentally downgraded my luasocket while troubleshooting something else at some point in the past few months which lead to the 2 crashes a couple of days ago. I have since been unable to crash openLuup again. Just a data point... I am still seeing these errors in the log but again, no crash.

This is the error I am seeing when I am switching network connection on homewave while it is polling openLuup:

2021-03-25 13:59:40.533 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x7f3a6d48d9d8' sending 2 bytes to tcp{client}: 0x7f3a6d48d9d8 2021-03-25 13:59:46.651 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x7f3a6d6c5520' sending 2 bytes to tcp{client}: 0x7f3a6d6c5520 2021-03-25 13:59:47.192 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x7f3a6d6c5520' sending 2 bytes to tcp{client}: 0x7f3a6d6c5520 2021-03-25 13:59:47.392 openLuup.server:: error 'socket.select() not ready to send tcp{client}: 0x7f3a6d6c5520' sending 6 bytes to tcp{client}: 0x7f3a6d6c5520 -

The Homewave one is interesting. Might just be a slow connection. I'll make the timeout for socket.select() larger (probably user-configurable.) So HomeWave works OK anyway?

Ah, very interesting. I know what the second one is. Brand new variables are (in Vera, hence openLuup too) given the default value of EMPTY. These messages come from the HIstorian (expecting a numeric value) which you have enabled?? I suspect that they will go away after the first update (so after the system has been running a while, so long as the actual device is working.)

@akbooer yes Homewave works as it should. At times it is a bit slow to connect, usually quite ok.

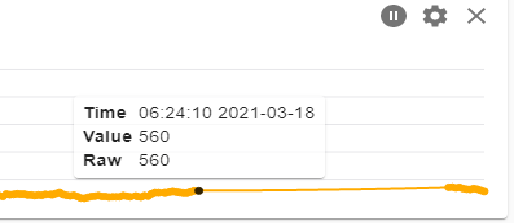

I have enabled Historian and have connected it to Grafana in which I have some graphs.

@rafale77 I tried to switch between cellular&vpn and wifi with Homewave and it seems to work without crashing OpenLuup. I am using Qvpn from Qnap.

-

@akbooer yes Homewave works as it should. At times it is a bit slow to connect, usually quite ok.

I have enabled Historian and have connected it to Grafana in which I have some graphs.

@rafale77 I tried to switch between cellular&vpn and wifi with Homewave and it seems to work without crashing OpenLuup. I am using Qvpn from Qnap.

Any update?

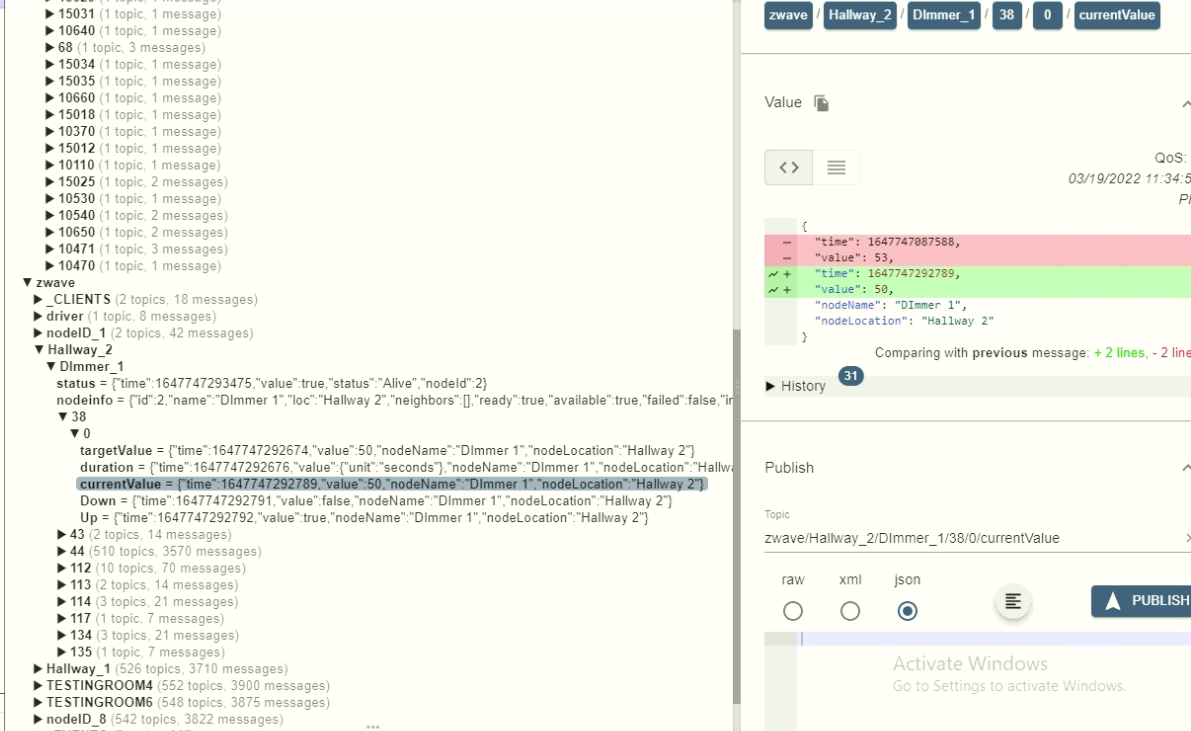

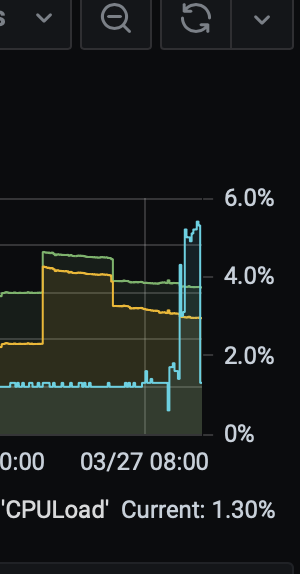

I just inadvertently stress tested openLuup's socket after all the updates this morning:

I went working in the yard cutting wood this morning which trips my ipcam motion sensor which then trips HomeAssistant object detection which then scans my camera stream using a deep learning model and when it detects a person sends an http call to openLuup to trip a security sensor on which I run a scene to do other things ranging from notifications to announcement depending on housemode etc... This happens at a rate of 5Hz (one call every 200ms)... You can see that the high frequency of http calls on top of the regular polling (I poll z-way at rate of 2Hz for example) made openLuup's CPU utilization jump! yet no crash...

Edit: And I checked the log to discover a lot more errors of sending bytes to a closed connection but as I said, it handled like a champ.

-

5% peak load? Shameful!

I’ve been stress testing too, and haven’t been able to make anything crash recently. I’m crafting a new Docker to take over from my main, and ageing, BeagleBone Black system, and the I/O there just whistles along with cpu at under 0.2%. I also see that RapidJson is available as a standard install of Alpine, so I may include that.

My conclusion, following yours, is that this is low-level LuaSocket issues on out of date libraries, which only show a problem when moderately stressed. I don’t think there’s anything more I can do at the openLuup coding level.

-

5% peak load? Shameful!

I’ve been stress testing too, and haven’t been able to make anything crash recently. I’m crafting a new Docker to take over from my main, and ageing, BeagleBone Black system, and the I/O there just whistles along with cpu at under 0.2%. I also see that RapidJson is available as a standard install of Alpine, so I may include that.

My conclusion, following yours, is that this is low-level LuaSocket issues on out of date libraries, which only show a problem when moderately stressed. I don’t think there’s anything more I can do at the openLuup coding level.

@akbooer said in OpenLuup unavailable:

5% peak load? Shameful!

I know! right? lol! As you know I optimize things to the edge... running on luajit, having turned JIT back on and rapidjson which I highly recommend over cjson because it enables not having to failover to dkjson if you so desire. I also regularly look at library updates which admittedly for lua, are sadly rare. I even tested a number of forks of luajit and found nothing worth the trouble at this point.