Status Board - What’s your HA Information Dashboard ?

-

Image source: https://panic.com/blog/panic-status-board-2013-edition/

I’ve always liked the idea of having a screen located somewhere in the house that would let me see the status of pretty much everything. (Hardware-wise, I’m just thinking of a basic Raspberry Pi fixed to a VESA mount and screwed to the back of an old monitor.)

I’ve tried a number of tools/apps over the years, one of which was PanicBoard (where the above image comes from) - which seemed to have some potential, but the owners stopped developing/investing in that a while back.

What are people using ?

Is there something, perhaps a single tool/app that this community would collectively support/promote, one that no matter what HA you used, you could submit information to and have it displayed ?

**Just to be clear, I’m referring to status/information boards, not a touch-based control board where you can turn things on/off, etc.**

-

...the nice thing about Grafana, is that it can pull data directly from openLuup's Data Historian, which uses an industry-standard API (Graphite.)
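
As a rough illustration of what a Graphite-style API looks like from the client side, here is a minimal Python sketch. It builds a render URL and parses the JSON that a Graphite-compatible endpoint returns. The host, port, and metric name below are placeholders assumed for illustration; check the openLuup documentation for the exact endpoint on your install.

```python
import json
from urllib.parse import urlencode


def render_url(base_url, target, since="-24h"):
    """Build a Graphite render-API URL asking for JSON output."""
    query = urlencode({"target": target, "from": since, "format": "json"})
    return f"{base_url}/render?{query}"


def parse_series(payload):
    """Turn a Graphite JSON reply into {target: [(timestamp, value), ...]}.

    Graphite returns datapoints as [value, timestamp] pairs; null values
    (gaps in the data) are skipped here.
    """
    series = {}
    for item in json.loads(payload):
        series[item["target"]] = [
            (ts, value) for value, ts in item["datapoints"] if value is not None
        ]
    return series


# Hypothetical host and metric name, for illustration only.
url = render_url("http://openluup.local:3480", "house.kitchen.temperature")

# A hand-written sample reply in the Graphite JSON format.
sample = (
    '[{"target": "house.kitchen.temperature", '
    '"datapoints": [[21.5, 1600000000], [null, 1600000600], [21.7, 1600001200]]}]'
)
points = parse_series(sample)
```

Grafana's Graphite data source issues the same kind of request for you, so a sketch like this is mainly useful for checking by hand that the historian is returning the series you expect.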

-

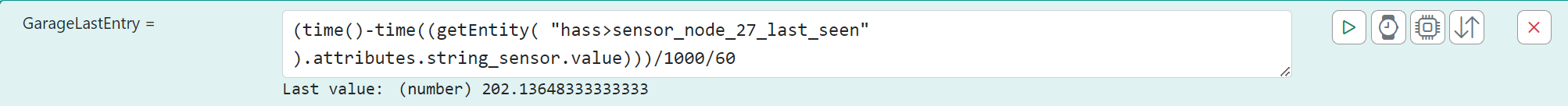

AK - I have to say I've been a bit lazy about keeping up with openLuup's graphing ability (and reading the manual). I see I can graph virtually anything listed under Console --> Historian --> Cache. There is also DataYours, but currently I'm doing this (can't even remember how this works):

I'm unsure what's ancient technology or what each one entails, e.g. AFAIK Grafana needs a Grafana server to be set up, etc. I presume that can be done on a RasPi.

What URL shows what you have shown above? (Maybe we need a new thread for openLuup graphing techniques?)

-

Does anyone here use an alternative to ImperiHome? The ImperiHome bridge doesn't transfer all sensors for some reason: motion sensors, light sensors, and UV don't come over.

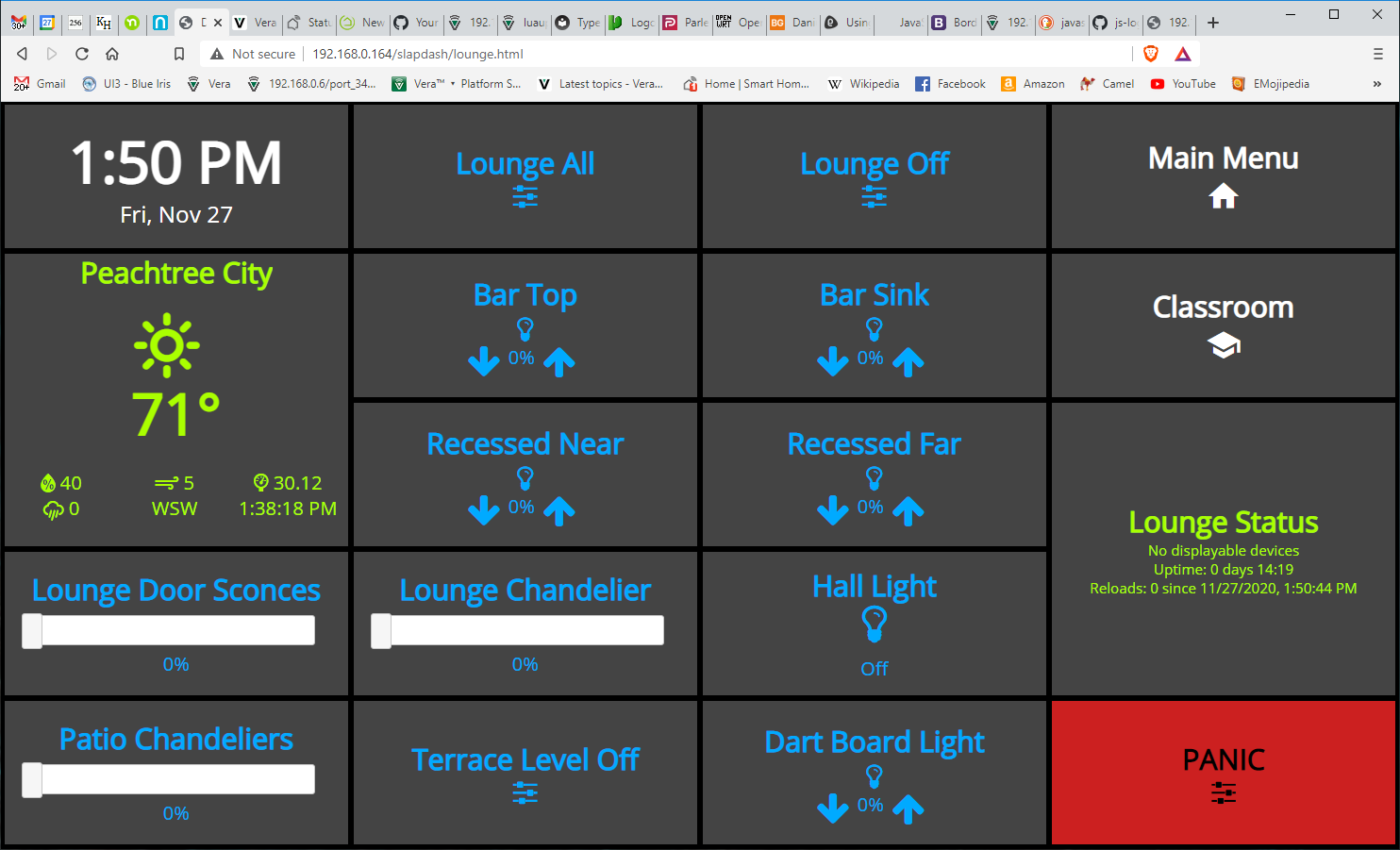

I want a status panel, but I also want to be able to set, e.g., light schemes, open the garage door, etc. from these panels (old tablets and phones in 3D-printed frames).

-

I've been looking at Home Remote, but it seems to need to connect to the Vera servers for credentials, and I can't find another way to connect to openLuup.

@perh Take a look at Homewave if you are on iOS. It works with Vera, both UI5 and UI7, and it also works with openLuup. You can have multiple controllers mapped seamlessly at the same time.

For Veras it works over cellular access; for openLuup you need a VPN to access the system when you are off-site. //ArcherS

-

There is other visualisation software that will do what you want, but it comes with a high price tag...

Possibly the best professional system, IMHO, is EisBär SCADA (Google is your friend). There are other professional systems available; it all comes down to price or user familiarity.

-

@perh I ended up with my own solution.

I searched a lot, but I couldn’t really find anything ready-made. It’s obviously not generic, but I have thermostats, A/C, sensors and much more. It’s running in Fully Kiosk on Fire tablets, which I control via MQTT/API, so I can display cams after motion events and much more.
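
To make the "show the camera after a motion event" idea concrete, here is a small Python sketch. It is not the poster's code. It assumes the tablet exposes Fully Kiosk's Remote Admin interface on port 2323 and that `loadUrl` is the command for loading a page; verify both against the Fully Kiosk documentation. The host names, password, and URLs are made up.

```python
from urllib.parse import urlencode


def kiosk_command_url(tablet_host, password, cmd, **params):
    """Build a remote-admin request URL (assumed format: port 2323, cmd=...)."""
    query = urlencode({"cmd": cmd, "password": password, **params})
    return f"http://{tablet_host}:2323/?{query}"


def page_for(now, last_motion, camera_url, dashboard_url, hold_seconds=30):
    """Pick the page to show: the camera while motion is recent, else the dashboard."""
    if last_motion is not None and 0 <= now - last_motion < hold_seconds:
        return camera_url
    return dashboard_url


# Hypothetical values for illustration only.
CAMERA = "http://cams.local/front"
DASHBOARD = "http://dash.local/"

# Motion was seen 10 seconds ago, so the camera page is chosen.
page = page_for(now=1010, last_motion=1000, camera_url=CAMERA, dashboard_url=DASHBOARD)
request = kiosk_command_url("tablet.local", "secret", "loadUrl", url=page)
```

In a real setup, a handler for the motion event (delivered over MQTT, for example) would fetch `request` with an HTTP client, and a timer would switch the tablet back to the dashboard once the hold period expires.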

-

I've also rolled my own. It will be included for optional use with the new multi-system Reactor.

-

@toggledbits said in Status Board - What’s your HA Information Dashboard ?:

I've also rolled my own. It will be included for optional use with the new multi-system Reactor.

Missed this.......tell us more.......

-

@black-cat It's a skinnable tile interface that maps properties/capabilities to display controls (widgets). Each widget supports multiple canned layouts, and you can do custom layouts (either as per-device exceptions or globally available). Widgets are tiled and moveable/sizeable. They are mapped to device properties, and you can use expressions to fetch values, map values, etc.

For example, the scene widget can be "active" based on any device state or expression, not just the "active flag" on the scene itself--that is, a widget is not limited to sourcing data from one device/thing. So, for example, the thermostat widget can draw current temperature from an in-room multi-sensor, and on-off heating control from a plug-in switch, etc. Basically, instead of having to make a virtual device to collect data into a single object that is then displayed by a canned-appearance widget following rules particular to that device type, the widget just brings all the data together from whatever sources and displays it; actions work the same.

If you want your thermostat in the bedroom to display the outdoor temperature in Moscow, you can do it. Easily. If you want a "binary sensor" widget to show tripped/alarm state when the pool pump is running after sunset, no problem. And you can do fun but sensible/expected things like when you activate a scene via a widget, the widget changes to the counter-scene (e.g. when you tap "Kitchen On" the lights come on and the widget then changes to "Kitchen Off"). Colors, fonts, sizes, etc. are all configurable/replaceable (CSS, HTML).
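
The idea of a widget that pulls each of its fields from a different device can be sketched in a few lines of Python. This is purely illustrative and not the dashboard's actual code or configuration format; the `Widget` class, the `controller>device` keys, and the state layout are all invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Hypothetical state snapshot: "controller>device" -> attribute name -> value.
State = Dict[str, Dict[str, object]]


@dataclass
class Widget:
    """A tile whose fields are each bound to an expression over all state."""
    title: str
    bindings: Dict[str, Callable[[State], object]] = field(default_factory=dict)

    def render(self, state: State) -> Dict[str, object]:
        # Evaluate every binding against the same shared snapshot.
        return {name: expr(state) for name, expr in self.bindings.items()}


state: State = {
    "zway>bedroom_multisensor": {"temperature": 19.5},
    "tasmota>heater_plug": {"on": True},
    "weather>moscow": {"temperature": -4.0},
    "vera>pool_pump": {"on": True},
    "clock>sun": {"after_sunset": True},
}

# One thermostat tile, three different sources.
thermostat = Widget(
    title="Bedroom",
    bindings={
        "current": lambda s: s["zway>bedroom_multisensor"]["temperature"],
        "heating": lambda s: s["tasmota>heater_plug"]["on"],
        "outdoor": lambda s: s["weather>moscow"]["temperature"],
    },
)

# A "binary sensor" tile that trips when the pump runs after sunset.
pool_alarm = Widget(
    title="Pool pump",
    bindings={
        "tripped": lambda s: s["vera>pool_pump"]["on"] and s["clock>sun"]["after_sunset"],
    },
)

tile = thermostat.render(state)
alarm = pool_alarm.render(state)
```

The design point is that the widget owns the mapping, so no virtual device has to exist just to gather values into one place.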

I've used and evolved this dashboard for years in my own home. It actually came into being first in 2017 when I made my first move away from Vera toward HomeAssistant (didn't happen, but that's another story). It was for family use, so right from the start, my idea was that nobody needs to know or care which controller is managing a device. Its hardware abstraction layer served as the launching point for a multi-system Reactor; I'll call it "MSR" here for brevity's sake. This MSR will work in a similar way: knowing the attributes and capabilities of a device, you can create rules using those, and rules that incorporate this data from multiple sources. That is, if your bedroom temperature is controlled by a space heater on a Tasmota-based relay board using control logic driven by input from a ZWay+openLuup-connected multi-sensor's temperature measurement, no problem.

You configure any number of "controllers"; each instance announces what devices it has in inventory, and what attributes and capabilities they have. A controller can be an interface to Vera/openLuup, or Hass, or Hubitat, or just an HTTP-based element that fetches weather from OpenWeatherMap, or an interface to your EVL3/4-connected alarm panel, etc. It is an interface that simply says "these are the objects I have and this is what they know and do". So any device could be supported by a plugin in your Vera/openLuup/Hass/HE/other HA controller, or it could come from a dedicated controller crafted just for that device. For example, it currently supports Sonos through the Vera/openLuup plugin, but I (or someone) could write a dedicated Sonos controller that talks directly to the Sonos zones on the network and bypasses the plugin, maybe even uses their new API rather than UPnP. Controllers have a strictly defined behavior/contract, with the intention that others can develop controllers as well. This aspect is making MSR grow legs, a bit... it's really turning into a home automation controller all on its own.

I foresee an ecosystem of available add-on controllers for every manner of device in future. This gives you the flexibility to determine what best supports the products you use; for example, if support for a particular Fibaro or Zooz device in Vera/eZLO is lacking/buggy (no--say it's not so!), you can instead include it on your openLuup+ZWay, Hass, or HE controller where the support is better, and MSR can find it there. But when creating your rules and activities, you don't have to know or care where that device lives.

To the maximum extent possible, I am also keeping an architecture and implementation in which system objects (devices, groups, scenes, etc.) are entirely overridable and creatable through configuration. If you have a device type on Vera/openLuup that MSR doesn't natively support, you should be able to just go to configuration and say "this device type, or even this specific device, has these capabilities and these attributes". If a capability doesn't exist, you can create it locally immediately. Up and running in five minutes or less (modulo the first-time learning curve, of course). And for all of this, you should be able to contribute the configuration to the community if you wish (or find configurations/capabilities others have done and apply them). And of course, whatever you create/train is available both in the Reactor part and the Dashboard part.
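
The controller contract described here ("these are the objects I have and this is what they know and do") might look roughly like the following Python sketch. MSR itself is stated not to be Lua, but its real interfaces are not shown in this thread, so every name below (`Entity`, `Controller`, `build_registry`, the capability strings) is hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional, Protocol, Set


@dataclass
class Entity:
    """One object a controller reports: what it can do and what it knows."""
    id: str
    capabilities: Set[str]
    attributes: Dict[str, object]


class Controller(Protocol):
    """The contract: a controller only has to announce its inventory."""
    name: str

    def inventory(self) -> Iterable[Entity]: ...


class StaticController:
    """Stand-in for a real bridge (Vera, Hass, ...), fed from a fixed list."""

    def __init__(self, name: str, entities: Iterable[Entity]):
        self.name = name
        self._entities = list(entities)

    def inventory(self) -> Iterable[Entity]:
        return self._entities


def build_registry(
    controllers: Iterable[Controller],
    overrides: Optional[Dict[str, Set[str]]] = None,
) -> Dict[str, Entity]:
    """Merge all inventories under 'controller>id' keys.

    `overrides` adds capabilities by configuration, for devices the
    controller does not describe fully.
    """
    registry: Dict[str, Entity] = {}
    for controller in controllers:
        for entity in controller.inventory():
            key = f"{controller.name}>{entity.id}"
            extra = (overrides or {}).get(key, set())
            registry[key] = Entity(
                entity.id, entity.capabilities | extra, dict(entity.attributes)
            )
    return registry


def find_by_capability(registry: Dict[str, Entity], capability: str) -> List[str]:
    """Rules ask for a capability, not for a particular controller."""
    return sorted(k for k, e in registry.items() if capability in e.capabilities)


vera = StaticController("vera", [
    Entity("sonos_lounge", {"media_player"}, {"volume": 20}),
    Entity("pool_pump", {"power_switch"}, {"on": False}),
])
hass = StaticController("hass", [
    Entity("hall_dimmer", {"power_switch", "dimming"}, {"level": 40}),
])

registry = build_registry([vera, hass], {"vera>pool_pump": {"energy_sensor"}})
switches = find_by_capability(registry, "power_switch")
```

Because rules and widgets would look devices up by capability, moving a device from one controller to another changes only its registry key, not the logic built on top of it.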

I've focused mostly on the rules and reactions part of MSR for several weeks, and it lives and breathes now. Although algorithmically it shares ideas with Vera/openLuup Reactor, it is an entirely new code base (and not Lua). Huge strides have been made quickly, but of course, there are a lot of "TBD" comments in the code, and I'm sure no shortage of crashes in boundary conditions from things like unfinished input validation and so on. It needs combing out, some deep code reviews (which I prefer to do on paper), and backporting of some of the evolved hardware abstraction to the Dashboard. There's plenty to do. But, with the freedom of creating the environment rather than working in someone else's, it's much faster and easier, and I'm really pleased with the acceleration towards something usable over the last month. I'm about ready to cut over my own home's automations to it. There is nothing like the pressure of pleasing my "driving coach" to make sure I get things working well, and quickly.

-

@toggledbits Wow, sounds really exciting!

Today I use Homewave on my iPhone and iPad; this sounds like the next step up towards a real dashboard. //ArcherS

Install Grafana on Raspberry Pi | Grafana Labs
